I have been testing Analytics for the past 10+ years. In the initial days, it was very painful and error-prone, as I was doing this manually. Over the years, as I understood this niche area better, and spent time understanding the reason and impact of data Analytics on any product and business, I started getting smarter about how to test analytics events well.

This post will focus on how to test Analytics for Mobile apps (Android / iOS), and also answer some questions I have gotten from the community regarding the same.

Table of contents

- What is Analytics?

- Why is Analytics important?

- How do teams use Analytics?

- How to implement Analytics in your product?

- What is an Analytics Event?

- Different ways to test Analytics events

- Automating Analytics Events

- Differences in Analytics for Mobile Apps Vs Web sites

- A Comprehensive System / end-2-end Test Automation Solution

- Answers to questions from community

What is Analytics?

Analytics is the “air your product breathes”. Analytics allows teams to:

- Know their users

- Measure outcome and value

- Take decisions

Why is Analytics important?

Analytics allows the business team and product team to understand how well (or not) the features are being used by the users of the system. Without this data, the team would (almost) be shooting in the dark for the ways the product needs to evolve.

Analytics data is critical for understanding where in a feature journey users “drop off”. From that, the team can infer whether the drop is caused by the way the feature has been designed, by an inadequate user experience, or by a defect in the implementation.

How do teams use Analytics?

For a team to know how its product is used, the product needs to be instrumented so that it shares meaningful (non-private) information about its usage. From this data, the team tries to infer context and usage patterns, which serve as inputs to make the product better.

The instrumentation I refer to above is of different types.

This can be logs sent to your servers – typically these are technical information about the product.

Another form of instrumentation would be analytics events. These capture the nature of interaction and associated metadata, and send that information to (typically) a separate server / tool. This information is sent asynchronously, so it should not affect the functioning or the performance of the product.

This is typically a 4-step process:

- Capture

  - You need to know what data you want, and why.

  - Implement the capturing of data based on specific user action(s)

- Collect

  - The captured data needs to be collected in a central server.

  - There are many Analytics tools available (commercial & open-source)

  - Many organizations end up building their own tool based on specific customisations / requirements

- Prepare data for Analysis

  - The collected data needs to be analysed and put in context to make meaning

- Report

  - Based on the context of the analysed data, reports would be generated that show patterns with details and reasons

  - This allows teams to evolve the product in better ways for business and their users

How to implement Analytics in your product?

Once you know what information you want to capture and when, implementing Analytics into your product goes through the same process as for your regular product features & functionalities.

Implementing Analytics

Step 1: Embed Analytics library

The analytics library is typically a very light-weight library, and is added as a part of your web pages or your native apps (android or iOS).

Step 2: Trigger the event

Once the library is embedded in the product, whenever the user performs a specific, predetermined action, the front-end client code captures all the relevant information regarding the event, and then triggers a call to the analytics tool being used with that information.

Ex: Trigger an analytics event when user “clicks on the search button”

The data in the triggered event can be sent in 2 ways:

- As part of query parameters in the request.

- As part of the POST body in the request. This is a preferred approach if the data to be sent is large.
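To make the two options concrete, here is a minimal sketch in Python. The endpoint URL, the event name and the parameter names are all hypothetical, chosen only for illustration; a real analytics library defines its own. The requests are only built, not sent.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical endpoint, for illustration only.
COLLECT_URL = "https://analytics.example.com/collect"


def build_get_request(params: dict) -> Request:
    """Option 1: carry the event data as query parameters."""
    return Request(f"{COLLECT_URL}?{urlencode(params)}", method="GET")


def build_post_request(params: dict) -> Request:
    """Option 2: carry the same data in the POST body (suits larger payloads)."""
    body = urlencode(params).encode("utf-8")
    return Request(COLLECT_URL, data=body, method="POST")


# Hypothetical event triggered when the user clicks on the search button.
event = {"event": "search_click", "query": "analytics testing", "screen": "home"}
get_req = build_get_request(event)
post_req = build_post_request(event)

print(get_req.get_method(), get_req.full_url)
print(post_req.get_method(), post_req.full_url, post_req.data)
```

In both cases the information is the same set of name-value pairs; only the place where it travels in the request differs.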

What is an Analytics Event?

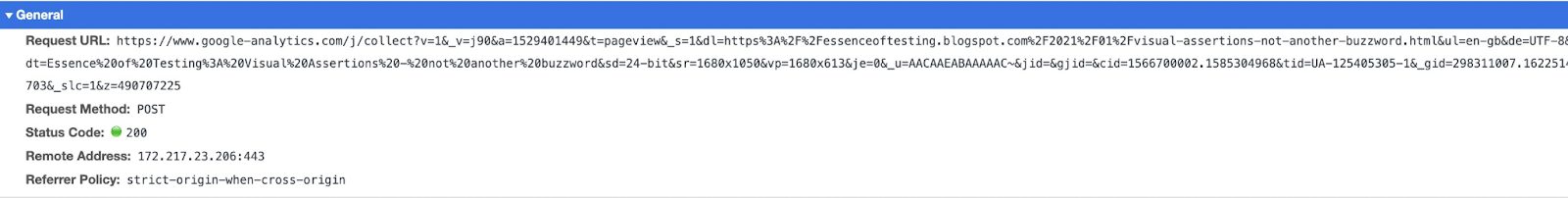

An analytics event is a simple HTTPS request sent to the Analytics tool(s) your product uses. Yes, your product may be using multiple tools to capture and visualise different types of information.

Below is an example of an analytics event.

Let’s dissect this call to understand what it is doing:

- The request in the above example is from my blog, which is using Google Analytics as the tool to capture and understand the readers of my blog.

- The request itself is a straightforward HTTPS call to the “collect” API endpoint.

- The real information, as shown in this call, is all the query parameters associated with the request.

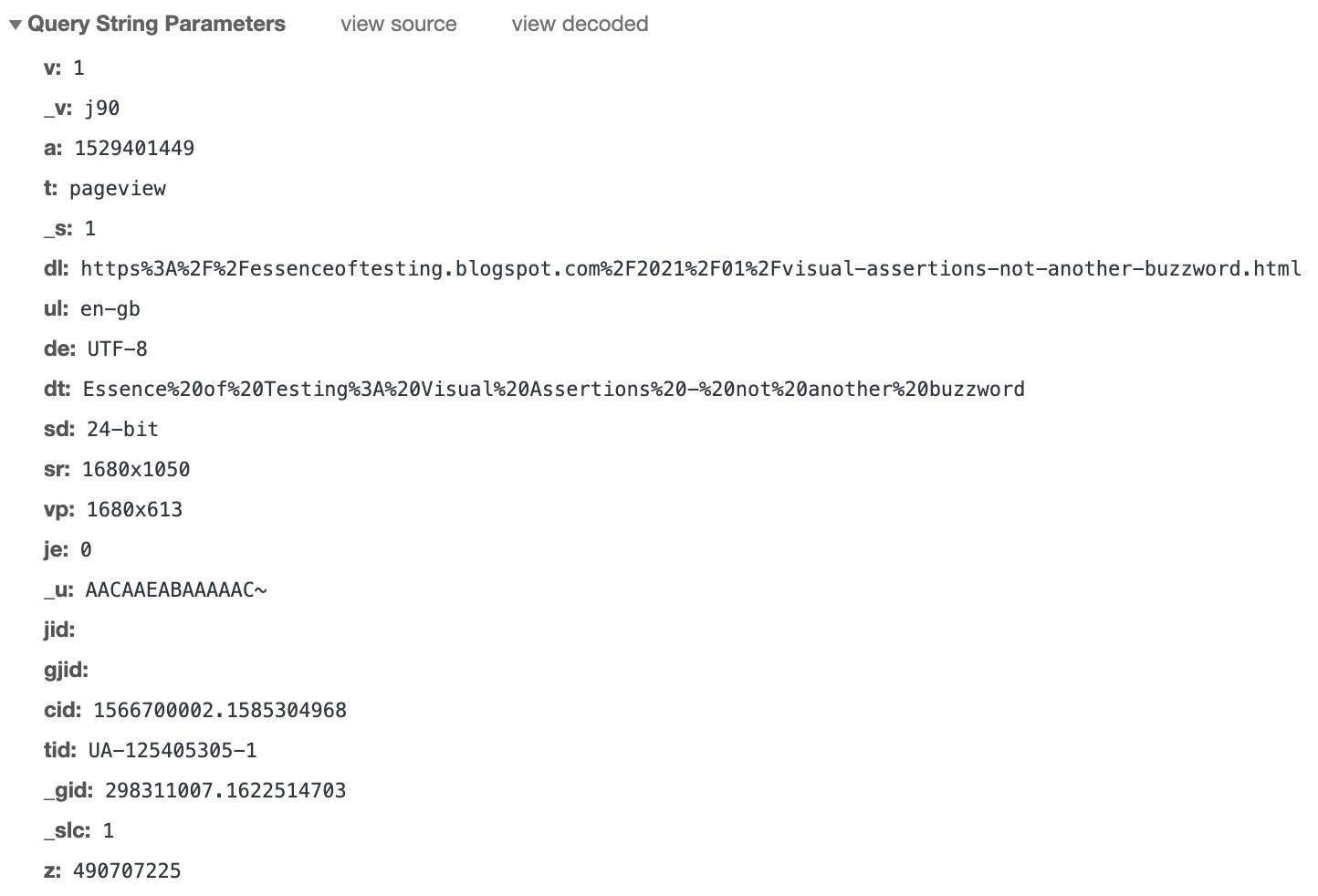

- For the above request, here is a closer look at the query parameters

- The query parameters are the collection of information captured to understand what the user (reader of my blog) did.

- The name-value pairs of query parameters may seem cryptic, and that is not wrong. It is probably designed in this fashion for the following reasons:

  - To reduce the packet size of these requests, which reduces network load, and eventual processing load on the analytics tool as well

  - To try and mask what information is being captured. This probably was more relevant in the HTTP days. Ex: “dnt=1” may indicate that the user has set preferences for “do-not-track=true”

- The mapping is created based on the analytics tool

- Even if the request is sent as part of the POST body, it would have a similar payload

- When the request reaches the analytics tool, the tool processes each request based on the mapping it had created, and creates the reports and charts based on what information was received by it
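When inspecting such a request, it helps to split the URL into its name-value pairs. The sketch below uses only the Python standard library; the captured URL and its parameter names are illustrative stand-ins, not the actual request from my blog.

```python
from urllib.parse import urlsplit, parse_qs


def event_params(url: str) -> dict:
    """Return the query parameters of a captured request as a flat dict."""
    query = urlsplit(url).query
    # parse_qs returns a list per name; keep_blank_values=True so that
    # parameters sent with an empty value are not silently dropped.
    parsed = parse_qs(query, keep_blank_values=True)
    return {name: values[0] for name, values in parsed.items()}


# Illustrative captured request (hypothetical host and parameters).
captured = "https://analytics.example.com/collect?v=1&t=pageview&dp=%2Fblog%2Fpost&dnt=1"
params = event_params(captured)
for name, value in sorted(params.items()):
    print(f"{name} = {value}")
```

Once the parameters are in a dict like this, comparing them with the expected values becomes a simple lookup.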

Different ways to test Analytics events

There are different ways to test Analytics events. Let’s look at each of them.

Test at the source

Well, if testing the end report is too late, then we need to shift-left and test at the source.

During Development

Based on requirements, the (front-end) developers would be adding the analytics library to the web pages or native apps. Then they set the trigger points when the event should be captured and sent to the analytics tool.

A good practice is for the analytics event generation and trigger to be implemented as a common function / module, which will be called by any functionality that needs to send an analytics event.

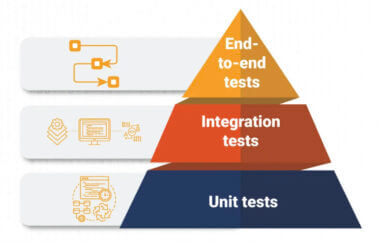

This will allow the developers to write unit tests to ensure:

- All the functionalities that need to trigger an event are collecting the correct and expected data (which will be converted to query parameters) to be sent to the common module

- The event generation module is working as expected – i.e. the request is created with the right structure and parameters (as received from its callers)

- The event can be sent / triggered with the correct structure and details as expected

This approach will ensure that your event triggering and generation logic is well tested. Also, these tests will be able to be run on developer machines as well as your build pipelines / jobs in your CI (Continuous Integration) server. So you get quick feedback in case anything goes wrong.
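As a rough sketch of this idea, assume a hypothetical common module `AnalyticsClient` whose transport is injected, so that unit tests never touch the network. The class, the function `on_search_clicked`, the endpoint and the event names are all made up for illustration; your product's module will look different.

```python
import unittest
from urllib.parse import urlencode


class AnalyticsClient:
    """Hypothetical common module: every feature sends events through track()."""

    def __init__(self, endpoint, send):
        self.endpoint = endpoint
        self.send = send  # injected transport, replaced by a fake in tests

    def track(self, name, **details):
        if not name:
            raise ValueError("event name is required")
        params = {"event": name, **details}
        self.send(f"{self.endpoint}?{urlencode(params)}")


def on_search_clicked(client, query):
    """Example caller: collects the data for the search event."""
    client.track("search_click", query=query)


class AnalyticsClientTest(unittest.TestCase):
    def setUp(self):
        self.sent = []  # records every request the module tries to send
        self.client = AnalyticsClient(
            "https://analytics.example.com/collect", self.sent.append
        )

    def test_caller_sends_expected_event(self):
        on_search_clicked(self.client, "shoes")
        self.assertEqual(
            self.sent,
            ["https://analytics.example.com/collect?event=search_click&query=shoes"],
        )

    def test_event_name_is_mandatory(self):
        with self.assertRaises(ValueError):
            self.client.track("")
        self.assertEqual(self.sent, [])


# Run the tests programmatically; in a real project a test runner would do this.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(AnalyticsClientTest)
result = unittest.TextTestRunner().run(suite)
```

The first test covers a caller collecting the right data, and the second covers the module rejecting a malformed event before anything is sent.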

During Manual / Exploratory Testing

While unit testing is critical to ensure all aspects of the code work as expected, the context of dynamic data based on real users cannot be understood from unit tests alone. Hence, we also need System Tests / End-2-End tests to understand if analytics is working well.

Reference: https://devrant.com/rants/754857/when-you-write-2-unit-tests-and-no-integration-tests

Let’s look at the details of how you can test Analytics Events during Testing in any of your internal testing environments:

- Requirements for testing

- You need the ability to capture / see the events being sent from your browser / mobile app

- For Browsers, you can simply refer to the Network tab in the Developer Tools

- For Native Apps, set up a proxy server on your computer, configure the device to route the traffic through the proxy server. Now launch the app and perform actions / interact with the functionality. All API requests (including Analytics event requests) will be captured in the Proxy server on your computer

- Based on the types of actions performed by you in the browser or the native app, you will be able to verify the details of those requests from the Network tab / Proxy server.

The details include – name of the event, and the details in the query parameter

This step is very important, and different from what your unit tests are able to validate. With this approach, you would be able to verify:

- Aspects like dynamic data (in the query parameters)

- If any request is repeated / duplicated

- Whether any request is not getting triggered from your product

- If requests get triggered on different browsers or devices

All of the above can be tested and verified even if the Analytics tool is not yet set up or configured as per business requirements.
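Some of these checks can be assisted with a small script. Browser developer tools, and many proxy tools, can export the captured traffic as a HAR file. The sketch below assumes such an export has been loaded into a dict, that events are sent as query parameters, and that the event name lives in a parameter called `event`. The host name and the event names are hypothetical.

```python
from collections import Counter
from urllib.parse import urlsplit, parse_qs


def analytics_events(har: dict, host: str) -> list:
    """Return the query parameters of every request sent to the analytics host."""
    events = []
    for entry in har["log"]["entries"]:
        parts = urlsplit(entry["request"]["url"])
        if parts.hostname == host:
            events.append({k: v[0] for k, v in parse_qs(parts.query).items()})
    return events


def check_events(events: list, expected_names: list, name_key: str = "event"):
    """Report events sent more than once, and expected events that were never sent."""
    counts = Counter(e.get(name_key) for e in events)
    duplicates = sorted(n for n, c in counts.items() if n is not None and c > 1)
    missing = sorted(n for n in expected_names if counts[n] == 0)
    return duplicates, missing


# Hand-written stand-in for an exported HAR file (normally loaded with json.load).
har = {"log": {"entries": [
    {"request": {"url": "https://analytics.example.com/collect?event=app_open"}},
    {"request": {"url": "https://api.example.com/search?q=shoes"}},
    {"request": {"url": "https://analytics.example.com/collect?event=search_click&query=shoes"}},
    {"request": {"url": "https://analytics.example.com/collect?event=search_click&query=shoes"}},
]}}

events = analytics_events(har, "analytics.example.com")
duplicates, missing = check_events(events, ["app_open", "search_click", "checkout"])
print("duplicates:", duplicates)
print("missing:", missing)
```

A repeated event is not always a defect (the user may really have searched twice), so the output is a prompt for investigation rather than a verdict.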

The advantage of this approach is that it complements the unit testing, and ensures that your product is behaving as expected in all scenarios.

The only challenge / disadvantage of this approach is that it is manual testing. Hence, it is very easy to miss certain scenarios or details in any given manual test cycle. It is also hard to scale and repeat this approach.

As part of Test Automation

Hence, we need a better approach. Just as unit tests are automated, the above testing activity should also be automated. The next section talks about a solution for how you can automate testing of Analytics events as part of your System / end-2-end test automation.

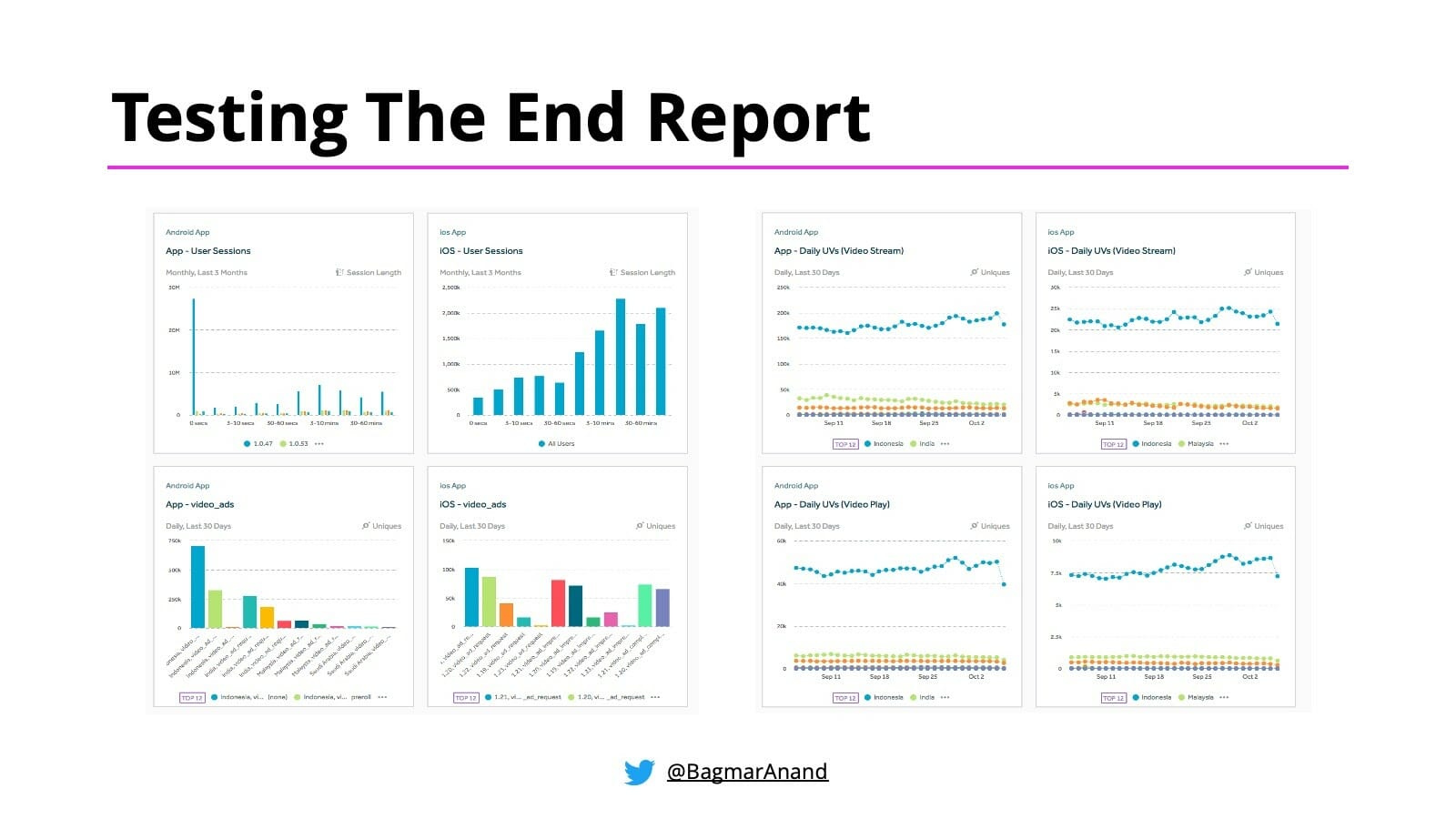

Test the end-report

This is unfortunately the most common approach teams take to test if the analytics events are being captured correctly, and even that may end up happening only in production, after the app is released to its users. But you need to test early. Hence the above technique of Testing at the source is critical for the team to know that the events are being triggered and validated as soon as the implementation is completed.

I would recommend this strategy after you have completed Testing at the Source!

There are pros and cons of this approach.

The biggest disadvantage though of the above approach is that it is too late!

That said, there is still a lot of value in doing this. It indicates that your Analytics tool is also configured correctly to accept the data, and that you are actually able to set up meaningful charts and reports that indicate patterns and allow you to identify and prioritise the next steps to make the product better.

Automating Analytics Events

Let’s look at the approach to automate testing of Analytics events as part of your System / end-2-end Test Automation.

We will talk separately about Web & Mobile – as both of them need a slightly different approach.

Web

Assumptions

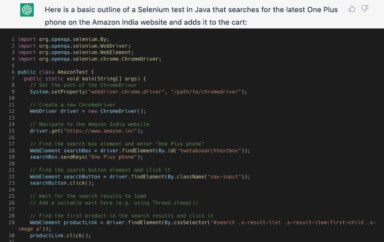

- The below technique assumes you are using Selenium WebDriver for your System / end-2-end automation. But you could implement a similar solution based on any other tools / technologies of your choice.

Prerequisites

- You already have System / end-2-end tests automated using Selenium WebDriver

- For each System / end-2-end test automated, have a full list of the Analytics events that are expected to be triggered, with all the expected query parameters (name & value)

Integrating with Functional Automation

There are 2 options to accomplish the Analytics event test automation for Web. They are as follows:

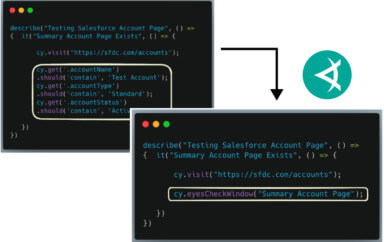

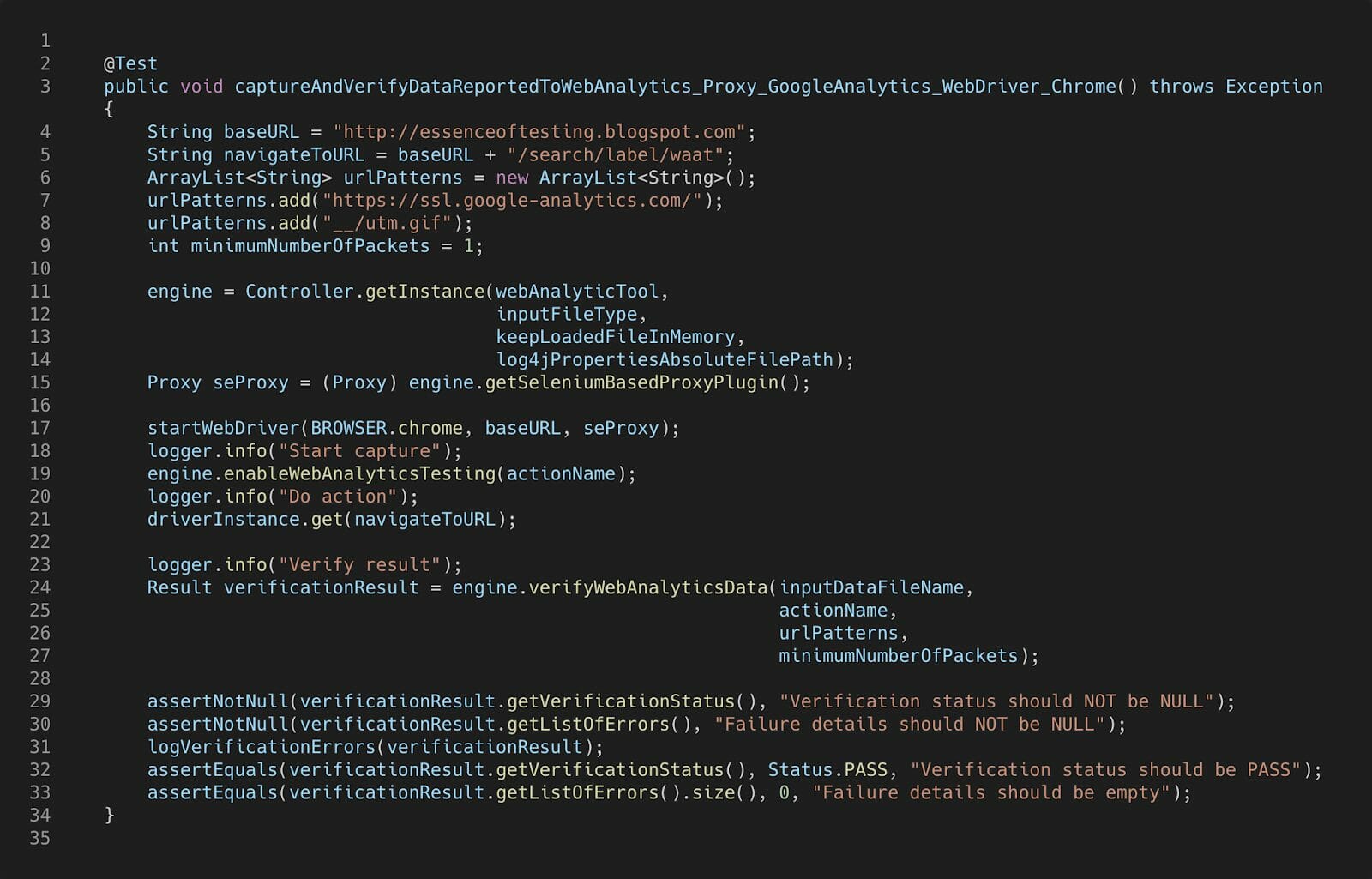

- Use WAAT

I built WAAT (Web Analytics Automation Testing) in Java & Ruby back in 2010. Integrate this in your automation framework using the instructions in the corresponding GitHub pages.

Here is an example of how this test would look using WAAT.

This approach will let you find the correct request and do the appropriate matching of parameters automatically.

- Selenium 4 (beta) with Chrome DevTools Protocol

With Selenium 4 almost available, you could potentially use the new APIs to query the network requests from the Chrome DevTools Protocol.

With this approach, you will need to write code to find the appropriate Analytics request in the list of requests captured, and compare the actual query parameters with what is expected.

That said, I will be working on enhancing WAAT to support a Chrome DevTools Protocol based plugin. Keep an eye out for updates to the WAAT project in the near future.
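As a rough illustration of the "find the request and compare parameters" step, here is a sketch in Python. It does not use the Selenium 4 DevTools API itself. Instead it assumes the requests were collected from Chrome's performance log, where, to my understanding, each entry carries a JSON `message` describing a DevTools event such as `Network.requestWillBeSent`. The host, event names and sample log entries are hypothetical.

```python
import json
from urllib.parse import urlsplit, parse_qs


def requests_to_host(performance_log: list, host: str) -> list:
    """Extract query parameters of requests to `host` from performance log entries."""
    found = []
    for entry in performance_log:
        message = json.loads(entry["message"])["message"]
        if message.get("method") != "Network.requestWillBeSent":
            continue  # ignore responses and other DevTools events
        parts = urlsplit(message["params"]["request"]["url"])
        if parts.hostname == host:
            found.append({k: v[0] for k, v in parse_qs(parts.query).items()})
    return found


def diff_params(expected: dict, actual: dict) -> dict:
    """Return {name: (expected, actual)} for every parameter that does not match."""
    return {
        name: (value, actual.get(name))
        for name, value in expected.items()
        if actual.get(name) != value
    }


# Hand-written sample entries standing in for a real browser log.
sample_log = [
    {"message": json.dumps({"message": {
        "method": "Network.requestWillBeSent",
        "params": {"request": {
            "url": "https://analytics.example.com/collect?event=search_click&query=shoes"
        }},
    }})},
    {"message": json.dumps({"message": {
        "method": "Network.responseReceived", "params": {},
    }})},
]

actual = requests_to_host(sample_log, "analytics.example.com")
mismatches = diff_params({"event": "search_click", "query": "boots"}, actual[0])
print(mismatches)
```

With a real browser session, the entries would typically come from enabling the `goog:loggingPrefs` capability with `{"performance": "ALL"}` and reading `driver.get_log("performance")`; treat that wiring as an assumption to verify against your Selenium version.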

Mobile (Android & iOS)

Assumptions

- The below technique assumes you are using Appium for your System / end-2-end automation. But you could implement a similar solution based on any other tools / technologies of your choice.

Prerequisites

- You already have System / end-2-end tests automated using Appium

- For each System / end-2-end test automated, have a full list of the Analytics events that are expected to be triggered, with all the expected query parameters (name & value)

Integrating with Functional Automation

There are 2 options to accomplish the Analytics event test automation for Mobile apps (Android / iOS). They are as follows:

- Use WAAT

As described for the web, you can integrate WAAT (Web Analytics Automation Testing) in your automation framework using the instructions in the corresponding GitHub pages.

On the device where the test is running, you would need to do the additional setup described in the Proxy setup for Android device.

This approach will let you find the correct request and do the appropriate matching of parameters automatically.

- Instrument app

This is a customized implementation, but can work great in some contexts. This is what you can do:

- Taking developer help, instrument the app to add the analytics events as a log message, in a clear and easily identifiable way

- For each System / end-2-end test you run, follow these steps

  - Have a list of expected analytics events with query parameters (in sequence) for this test

  - Clear the logs on the device

  - Run the System / end-2-end test

  - Retrieve the logs from the device

  - Retrieve all the analytics events that would be added to the logs while running the System / end-2-end tests

  - Compare the actual analytics events captured with the expected results

This approach will allow us to validate events as they are being sent as a result of running the System / end-2-end tests.
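The retrieve-and-compare steps above can be sketched as follows. This assumes the developers log each event as JSON after an agreed marker; the marker `ANALYTICS_EVENT`, the log format and the event names are all hypothetical. On Android, the logs could be cleared with `adb logcat -c` and dumped with `adb logcat -d`; here a hard-coded string stands in for the dump.

```python
import json

MARKER = "ANALYTICS_EVENT "  # hypothetical tag agreed with the developers


def events_from_logs(log_text: str) -> list:
    """Pull analytics events (JSON logged after MARKER) out of raw device logs, in order."""
    events = []
    for line in log_text.splitlines():
        _, found, payload = line.partition(MARKER)
        if found:
            events.append(json.loads(payload))
    return events


def first_mismatch(expected: list, actual: list):
    """Return the index of the first differing event, or None if the sequences match."""
    for index, (want, got) in enumerate(zip(expected, actual)):
        if want != got:
            return index
    if len(expected) != len(actual):
        return min(len(expected), len(actual))  # one sequence ended early
    return None


# Stand-in for the logs retrieved from the device after the test run.
device_logs = """\
05-01 10:00:01.120 D/MyApp: screen rendered
05-01 10:00:02.450 I/MyApp: ANALYTICS_EVENT {"event": "app_open"}
05-01 10:00:05.010 I/MyApp: ANALYTICS_EVENT {"event": "search_click", "query": "shoes"}
"""

expected = [{"event": "app_open"}, {"event": "search_click", "query": "shoes"}]
actual = events_from_logs(device_logs)
print("first mismatch:", first_mismatch(expected, actual))
```

Comparing in sequence catches missing, extra and out-of-order events with a single check.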

Differences in Analytics for Mobile Apps Vs Web sites

As you may have noticed in the above sections for Web and Mobile, the actual testing of Analytics events is really the same in either case. The differences are mostly in how the events are captured, and in any proxy setup that may be required.

There is another aspect that is different for Analytics testing for Mobile.

The Analytics tool SDK / library that is added to the Mobile app has an optimising feature: batching! This configurable feature (in most tools) allows customizing the number of requests that should be collected together. Once the batch is full, or on trigger of some specific events (like closing the app), all the events in the batch will be sent to the Analytics tool and then cleared / reset.

This feature is important for mobile devices, as the users may be on the move (or using the apps in Airplane mode) and may not have internet connectivity when using the app. In such cases, if the device does not cache the analytics requests, then that data may be lost. Hence it is important for the app to store the analytics events and then send them at a later point when there is connectivity available.

Batching of analytics events also helps minimize the network traffic generated by the app.

So when automating Mobile Analytics events, make sure that by the time the test completes, the events have actually been sent from the app (i.e. the batch has been flushed). Only then will they be seen in the logs or the proxy server, and only then can validation be done.

While batching can be a problem for Test Automation (since the events will not be generated / seen immediately), you could take one of these 2 approaches to make your tests deterministic:

- Configure the batch size to be 1, or turn off batching to enable triggering the events immediately. This can be done for your apps for the non-prod environments or as part of debug builds.

- Trigger the flushing of the batch through an action in the app (ex. Closing / minimizing the app). Talk to the developers to understand what actions will work for your app.
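To see why both options make tests deterministic, here is a toy model of batching in Python. It is not the implementation of any real SDK; the class `EventBatcher`, its default batch size and the event names are made up for illustration.

```python
class EventBatcher:
    """Toy model of SDK batching: events are held until the batch is full or flushed."""

    def __init__(self, send, batch_size=20):
        self.send = send              # callable that delivers a list of events
        self.batch_size = batch_size
        self.pending = []

    def track(self, event):
        self.pending.append(event)
        if len(self.pending) >= self.batch_size:
            self.flush()

    def flush(self):
        """Send whatever is queued, e.g. when the app goes to the background."""
        if self.pending:
            self.send(list(self.pending))
            self.pending.clear()


delivered = []  # each item is one batch that reached the "server"

# Production-like setting: nothing is visible until the batch fills or is flushed.
batched = EventBatcher(delivered.append, batch_size=3)
batched.track({"event": "app_open"})
print(len(delivered))  # 0, the event is still queued
batched.flush()
print(len(delivered))  # 1, one batch containing one event

# Test-build setting: a batch size of 1 sends every event immediately.
immediate = EventBatcher(delivered.append, batch_size=1)
immediate.track({"event": "search_click"})
print(len(delivered))  # 2
```

In the first case the test must trigger the flush before validating; in the second, each event is available for validation as soon as the action is performed.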

A Comprehensive System / end-2-end Test Automation Solution

I like my System Tests / end-2-end Test Automation solution to have the following capabilities built in:

- Ability to run tests on multiple platforms (web and native mobile – android & iOS)

- Run tests in parallel

- Tests manage their own test data

- Rich reporting

- Visual Testing using Applitools Visual AI

- Analytics Events validation

See this post on Automating Functional / End-2-End Tests Across Multiple Platforms for implementation details for building a robust, scalable and maintainable cross-platform Test Automation Framework.

Answers to questions from community

- How do you add the automated event tests on Android and iOS to the delivery pipeline?

- If you have automated the tests for Mobile using either of the above approaches, the same would work from CI as well. Of course, the CI agent machines (where the tests will be running) would need to have the same setup as discussed here.

- How do you make sure that new and old builds are working fine?

- The expected analytics events are compared with every new build / app generated. Any difference found there will be highlighted as part of your System / end-2-end test execution

- Is UI testing mandatory to do event testing?

- There are different levels of testing for Analytics. Refer to the Test at the source section. Ask yourself the question – what is the risk to the business team IF any set of events does not work correctly or relevant details are not captured? If there is a big risk, then it is advisable to do some form of System / end-2-end test automation and integrate Analytics automation along with that.

- Any suggestions on a shift-right approach here?

- We should actually be shifting left. Ensure Analytics requirements are part of the requirements, and build and test this along with the actual implementation and testing to prevent surprises later.

- How do we make sure everything is working fine in Production? Should we consider an alerting mechanism in case of sudden spike or loss of events?

- You could have a smoke test suite that runs against Production. These tests can validate functionality and analytics events.

- Regarding the alerts, it is always good to have these set up. The alerts would depend on the Analytics tool that you are using. That said, the nature of alerts would depend on specific functionality of the application-under-test.

- What happens when there are a lot of events to be automated? How do you prioritize?

- Take help from your product team to help prioritise. While all events are important, not all are critical. Do cost / value analysis and based on that, start.

- Testing at source means only UI testing or native testing? You mentioned about a debug app file, so is it possible to automate the events with native frameworks like espresso and XCUITest or only with Appium?

- There are 2 aspects of testing at the source: development & testing. Based on this understanding, figure out what unit testing can be done, and what will trigger the events in the context of integrated testing. If your automated tests using either Espresso or XCUITest can simulate the user actions, which will in turn trigger the events from the app when the test runs, then you can do Analytics automation at that level as well.

- Once the events are sent to the Analytics tool, the data would be stored in the database. How do you ensure that events are saved in the database? Did you have any other end to end tests to verify that? How do we make sure that? Verifying the network logs alone doesn’t guarantee that events will be dispatched to database

- The product / app does not write the events to the database.

- You are testing your product, and not the Analytics tool

- The app makes an HTTPS call to send the event with details to an independent Analytics server, which chooses to put this in some data store, in its own defined schema.

- This aspect will, in all likelihood, be transparent to you.

- Also, in most cases, no one will have access to the Analytics tool’s data store directly. So it does not make sense to verify the data is there in the database.

- Another thing to consider – you / the team would be choosing the Analytics tool based on the features it offers, its reliability and stability. So you should not be needing to “test” the Analytics tool, but instead, focus on the integration to ensure everything your team is building, is tested well.

- So my suggestion is:

- Test at the source (unit tests + System / end-2-end tests), for each new build

- Test the end report to ensure final integration is working well, in test and production

- Sometimes we make use of 3rd party products like https://segment.com/ to segregate events to different endpoints. As a result sometimes only a subset of the events (basis business rules / cost optimizations) might reach the target endpoint. How to manage these in an automation environment?

- Same answer as above.