Over the past two years, Applitools has hosted a series of code battles called Let the Code Speak. We pitted the most popular web testing frameworks against each other through rounds of code challenges, and we invited the audience to vote for which did them best. Now, to cap off the series, we hosted a panel of test automation experts to share their real-world experiences working with these web test frameworks. We called it Cypress, Playwright, Selenium, or WebdriverIO? Let the Engineers Speak!

Here were our panelists and the frameworks they represented:

- Gleb Bahmutov (Cypress) – Senior Director of Engineering at Mercari US

- Carter Capocaccia (Cypress) – Senior Engineering Manager – Quality Automation at Hilton

- Tally Barak (Playwright) – Software Architect at YOOBIC

- Steve Hernandez (Selenium) – Software Engineer in Test at Q2

- Jose Morales (WebdriverIO) – Automation Architect at Domino’s

I’m Andrew Knight – the Automation Panda – and I moderated this panel.

In this blog series, we will recap what these experts had to say about the biggest challenges they face, their favorite integrations, and whether they have ever considered changing their test framework. In this article, we’ll kick off the series by introducing each speaker with an overview of their projects in their own words.

Gleb’s Cypress Project at Mercari US

Gleb:

I’m in charge of the web automation group; we test our web application. Mercari US is an online marketplace where you can list an item, buy items, ship [items], and so on. I joined about a year and a half [ago], and my goal was to make it work so it doesn’t break.

There were previous attempts to automate web application testing that were not very successful, and they hired me specifically because I brought Cypress experience. Before that, I worked with Cypress for years, so I’m kind of partial. So you can kind of guess which framework I chose to implement end-to-end tests.

We currently run about 700 end-to-end tests every day multiple times per feature with each pull request. On average, our flake – you know, how many tests kind of pass or fail per test run – is probably half a percent, maybe below that. So we actually invested a lot of time in making sure it’s all green and working correctly. So that’s my story.
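As an editorial aside, the flake figure Gleb mentions can be made concrete with a little arithmetic. The sketch below is a minimal illustration, not Mercari's actual tooling: it assumes a test counts as flaky when its attempts within one run include both a failure and a pass, and the `TestOutcome` shape and function names are hypothetical.

```typescript
// Hypothetical shape of one test's outcome within a single CI run.
interface TestOutcome {
  name: string;
  // Result of each attempt, in order; more than one entry means the test was retried.
  attempts: Array<"passed" | "failed">;
}

// Assumption: a test is flaky if its attempts include both a failure and a pass.
function isFlaky(outcome: TestOutcome): boolean {
  const sawPass = outcome.attempts.some((a) => a === "passed");
  const sawFail = outcome.attempts.some((a) => a === "failed");
  return sawPass && sawFail;
}

// Flake rate as a percentage of all tests in the run.
function flakeRatePercent(outcomes: TestOutcome[]): number {
  if (outcomes.length === 0) {
    return 0;
  }
  const flakyCount = outcomes.filter(isFlaky).length;
  return (flakyCount / outcomes.length) * 100;
}

// Example: a run of 700 tests in which 3 needed a retry to pass.
const sampleRun: TestOutcome[] = [];
for (let i = 0; i < 700; i++) {
  sampleRun.push({
    name: `test-${i}`,
    attempts: i < 3 ? ["failed", "passed"] : ["passed"],
  });
}
console.log(flakeRatePercent(sampleRun).toFixed(2) + "%");
```

Under this definition, 3 flaky tests out of 700 is a flake rate of roughly 0.43%, which is in the range Gleb describes.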

Carter’s Cypress Project at Hilton

Carter:

Nice to meet you all today and thank you for the opportunity to speak with everybody. So I need to lead off with a little bit of a disclaimer here. Hilton does not necessarily endorse or use any of the products you may speak of today, and all the opinions that I’m going to talk about are my own.

So Hilton is a large corporation. We operate 7,000 properties in 122 countries. So when we talk about some of the concerns that I face as a quality automation engineer, we’re talking about accessibility, localization, analytics, [and] a variety of application architectures. We might have some that are server-side rendered. We might have some that are CMS-driven pages and even multiple types of CMS. We also have, you know, things that filter out bot traffic. We may use mock data, live data. We have availability engines, pricing engines. So things get pretty complex and therefore our strategy has to follow this complexity in a way that makes things simple for us to interpret results and act upon them quickly.

To give you guys an idea of the scale here, we typically are much like Gleb in this regard, where every single one of our changes runs all of our tests. So we are constantly executing tests all of the time. I think this year – I was just running these reports the [other] day to figure out for, you know, interview review stuff – I think I pulled up that we had so far in this year run about 1.2 million tests on our applications. That’s quite a large quantity. So, you know, if anything, that speaks to our architecture – [it] has some stability built into it – the fact that we were even able to execute that quantity of tests.

But yeah, I’m a Cypress user. I’ve been a Cypress user now for about three years. Much like Gleb, I got faced with the challenge of, “Well, this only runs in Chrome. It’s a young company, young team.” And yeah, I think, you know, we stuck with it, and obviously today Cypress has grown with us and we’re really, really happy to still be using the tool. We were a Webdriver shop before, so I’m really interested to see what Jose is doing. And there probably is still some Webdriver code hanging around here at Hilton. But yeah, I’m really excited to be here today, so thank you for the opportunity.

Tally’s Playwright Project at YOOBIC

Tally:

I work at YOOBIC. YOOBIC is a digital platform for frontline employees – that’s a way to say people like sellers in shops. And they use our application in their daily job – for the tasks they need to do, for their training, for their communication needs, and so on.

For this application to be useful, we have a very rich UI that we are using. And for that, we have decided to use web components. We’re using extensive JS and building web components. This is important because when we tried to automate the testing, this was one of the biggest barriers that we had to cross.

We used to work with WebdriverIO, which was good to some extent. But the thing that really was problematic for us was the shadow DOM support – and shadow root. So I got into the habit of going onto the web once every fortnight or month and just Googling “end-to-end testing shadow DOM support” and voila! In one of these Googlings, it actually worked out and I stumbled upon Playwright – and it said, “Yes, we have shadow DOM support.” So I went like, “Okay, I have to try that.” And I just looked at the documentation. It was like shadow DOM was no biggie. It was just like built/baked in naturally. I started testing it, and it worked like magic.

That was almost two and a half years ago. Playwright had a lot less than what it has today. It was still on version 1.2 or something. At the time, it was only the browser automation side; it didn’t have the test runner. And our tests were already written in Cucumber, and Cucumber is a BDD-style kind of testing. So this is the way we actually describe our functional test. So user selects mission, and then user starts, and so on – this is the test. We already had that from WebdriverIO, and basically we just changed the automation underneath to work with Playwright instead of Selenium WebdriverIO.

And this is still how we work. We are using Cucumber for running those tests. We use Playwright as the browser automation. We have our tested app and we are testing against server and the database. We have about 200 scenarios. We run them on every PR. It takes about 20 or 25 minutes with sharding, but this is because they are really long tests.
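As an editorial aside, the idea behind sharding is simply to split the scenario list into groups that run in parallel on separate machines. The sketch below shows one simple way to do that, round-robin assignment. It is an illustration only, not YOOBIC's setup or the mechanism of any particular runner; the function name and the shard count of 4 are assumptions.

```typescript
// Split a list of scenario names into `shardCount` groups using round-robin assignment.
// Hypothetical helper for illustration; real runners ship their own sharding options.
function shardScenarios(scenarios: string[], shardCount: number): string[][] {
  if (shardCount < 1 || Math.floor(shardCount) !== shardCount) {
    throw new Error("shardCount must be a positive integer");
  }
  const shards: string[][] = [];
  for (let i = 0; i < shardCount; i++) {
    shards.push([]);
  }
  // Scenario 0 goes to shard 0, scenario 1 to shard 1, and so on, wrapping around.
  scenarios.forEach((scenario, index) => {
    shards[index % shardCount].push(scenario);
  });
  return shards;
}

// Example: about 200 scenarios spread over an assumed 4 parallel workers.
const allScenarios: string[] = [];
for (let i = 1; i <= 200; i++) {
  allScenarios.push(`scenario-${i}`);
}
const sampleShards = shardScenarios(allScenarios, 4);
console.log(sampleShards.map((shard) => shard.length)); // [ 50, 50, 50, 50 ]
```

Round-robin keeps the groups the same size but ignores how long each scenario takes; with long-running tests like the ones Tally describes, balancing by recorded duration would likely give more even wall-clock times.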

It also solved a lot of other problems that we had previously, like the fact that you can open multiple browsers. Previously what we were doing, if you wanted to test multiple users, we would log in, log out, and log in with the other user. And here, all of a sudden, we could just spawn multiple windows and do that.

And one [additional] thing that I want to mention besides the functional test, this end-to-end test, we also run component tests. This is different; we are using the Playwright Test Runner, in fact. This is because we work with Angular, and Playwright component testing is not supported with Angular yet. We are using Storybook, and we test with Playwright on top of Storybook. So yeah, that’s my story.

Steve’s Selenium Project at Q2

Steve:

I actually started as a full stack developer at Precision Lender. We’re part of Q2, which is a big FinTech company. If you had priced any skyscrapers or went to get a loan for a skyscraper, there’s a very good chance that our software was used recently – I’m sure you’re all doing that.

Working on the front end, it’s a JavaScript-based application using Knockout but increasingly Vue. I worked over in that for about two and a half years at the company, and I saw the cool stuff that Andy and our other Andy were doing. And I was excited about it. I wanted to move over [and] see what it was all about. And I believe strongly in the power of test to not only improve the quality of the application, but also just the software development lifecycle and the whole experience for everyone and the developers.

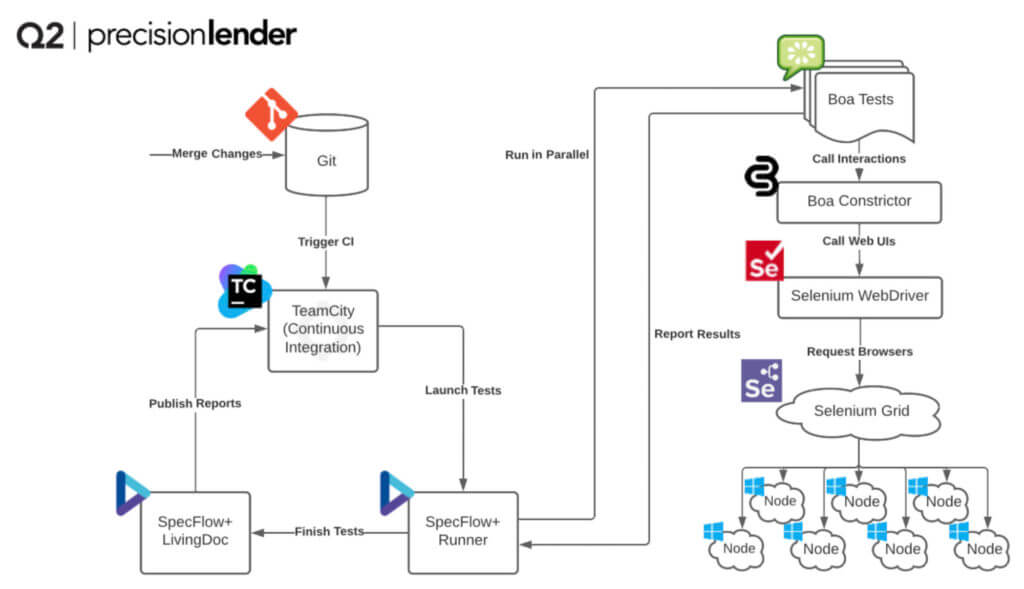

So what you guys have in front of you is a typical CI pipeline that we use. We use Git and we use TeamCity. And on a typical day, we run about 20,000 tests. We have about 6,000 unique tests and upon one commit to our main developed branch, we kick off about 1,500 tests and it takes about 20 to 25 minutes, depending on errors, test failures. On that check-in to TeamCity, we use a stack with SpecFlow and SpecFlow+ Runner kicks off our tests.

As I said before, we’re using Boa Constrictor underneath with the Screenplay Pattern. It acts as our actor, which is controlling the Selenium Webdriver. And then the Selenium Webdriver farms out the test work in parallel to the Selenium Grid, and our little nodes [in] an AKS cluster run through all of our tests and spit out a whole bunch of great documentation, one of my favorites being the SpecFlow+ Runner report, [along with] other good logging things like Azure Log Analytics.

Jose’s WebdriverIO Project at Domino’s

Jose:

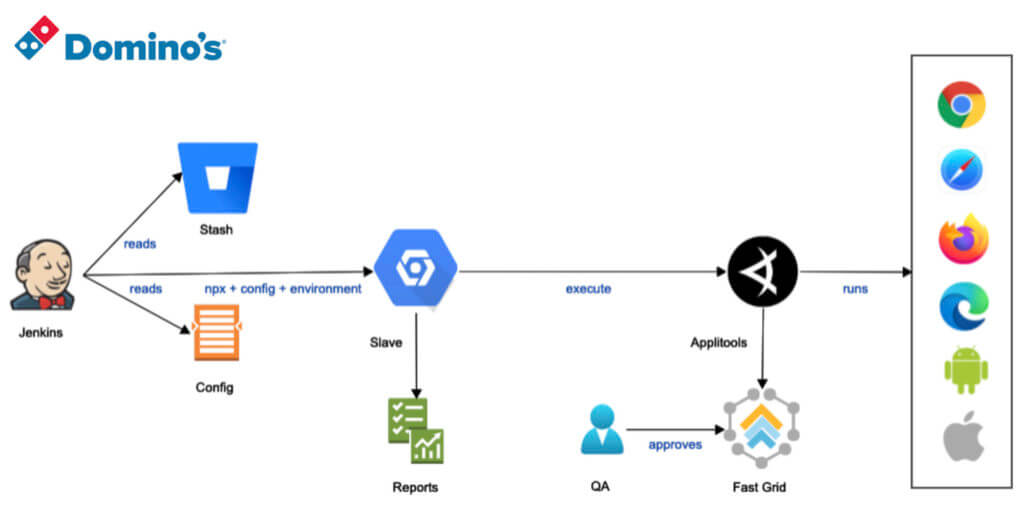

Thank you from all [of us at] Domino’s. Well, Domino’s is a big company, right? We have 4 billion dollars in sales every single year. And – only in the Super Bowl – we sell 2 million pizzas just in the United States. So we have a big product and our operations are very critical. So I decided to use WebdriverIO for our product. And this is the architecture that we created in Domino’s.

In Domino’s, we have different environments. We have QA, we have pre-production, and we have production. And for selecting different kinds of environments, we have different kinds of configuration. And in that configuration, Jenkins reads the configuration for that particular environment, we get the code from a stash, and then we are using virtual machines – it could be inside of the Domino’s network or it could be in Sauce Labs. Then we have a kind of report. And one thing that I really love about WebdriverIO is the fact that it’s open source, and it has huge libraries.
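As an editorial aside, per-environment configuration of the kind Jose describes often boils down to a lookup from an environment name to a set of settings that the CI job passes to the test framework. The sketch below is a minimal illustration under stated assumptions, not Domino's actual configuration: the environment names, the `example.com` URLs, the `runner` field, and the function name are all hypothetical.

```typescript
// Hypothetical environment names matching the three tiers mentioned above.
type EnvironmentName = "qa" | "preprod" | "prod";

interface EnvironmentConfig {
  // Base URL of the application under test (placeholder values below).
  baseUrl: string;
  // Where the browsers run: in-house virtual machines or a cloud provider.
  runner: "internal-vm" | "cloud";
}

// One entry per environment; a CI job would pick one by name.
const environments: Record<EnvironmentName, EnvironmentConfig> = {
  qa: { baseUrl: "https://qa.example.com", runner: "internal-vm" },
  preprod: { baseUrl: "https://preprod.example.com", runner: "cloud" },
  prod: { baseUrl: "https://www.example.com", runner: "cloud" },
};

// Resolve a name supplied by the CI job, failing loudly on typos.
function resolveEnvironment(name: string): EnvironmentConfig {
  if (name === "qa" || name === "preprod" || name === "prod") {
    return environments[name];
  }
  throw new Error(`Unknown environment: ${name}`);
}

// Example: the CI job asks for the QA settings.
const selected = resolveEnvironment("qa");
console.log(selected.baseUrl, selected.runner);
```

Failing on an unknown name, rather than silently falling back to a default, helps avoid accidentally running a suite against the wrong environment.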

So in Domino’s, we’re using WebdriverIO in three projects – Domino’s website, in the Domino’s re-architecture, and in the Domino’s call center application. [In fact,] we are using WebdriverIO with two different kinds of reports, and we’re using [it] with two different kinds of BDD frameworks. One is, of course, Cucumber; that is the most used in the industry. And another one is with Mocha. And in both, we have very successful results. We have close to 200 test scenarios right now. And we are executing every single week. And we are generating reports for those specific scenarios. We have a 90% success pass rate in all scenarios.

And we’re using Applitools as an integration for discovering issues – visual issues and visual AI validations. And basically in all those scenarios, we’re validating the results in all those browsers. We’re normally using Chrome, Safari, Firefox, Edge, and of course, we’re using mobile as well, in the flavors of Android and iOS.

And the cool thing is that it really depends on what the usage is for customers, right? Most of the customers that we have are using Chrome, and we are seeing what is our market, what our customers are using, and we are validating our products for those particular customers.

So I am really happy with WebdriverIO, because I was able to use it in other problems that we were facing. For example, in Malaysia we have some stores as well, and in the previous year we had a problem with performance in Malaysia. So I was able to integrate a plugin – Lighthouse from Google – in order to measure the performance for that particular market in Malaysia using WebdriverIO. So far for us [at] Domino’s, WebdriverIO is doing great. And I am really happy with the solution and the results and the framework that we selected. And that’s everything from my end. Thank you so much.

What are their biggest challenges?

So we’ve gotten an overview of the projects these test experts are working on and a little bit of their histories with their test framework of choice. This will help set a foundation to get to know our engineers and understand where they’re coming from as we continue our Cypress, Playwright, Selenium, or WebdriverIO? Let the Engineers Speak! series. In the next article, we’ll cover the biggest challenges these experts face in their current test automation projects.