The AI promise

AI as a technology is not new, but the field has seen huge advancements in the recent past.

Currently, AI technology seems to be mostly about using machine learning (ML) algorithms to train models on large volumes of data, and then using these trained models to make predictions or generate outcomes.

From a testing and test automation point of view, the question in my mind still remains: Will AI be able to automatically generate and update test cases? Find contextual defects in the product? Inspect code and test coverage to prevent defects from getting into production?

These are the questions I aim to answer in this post.

The hype

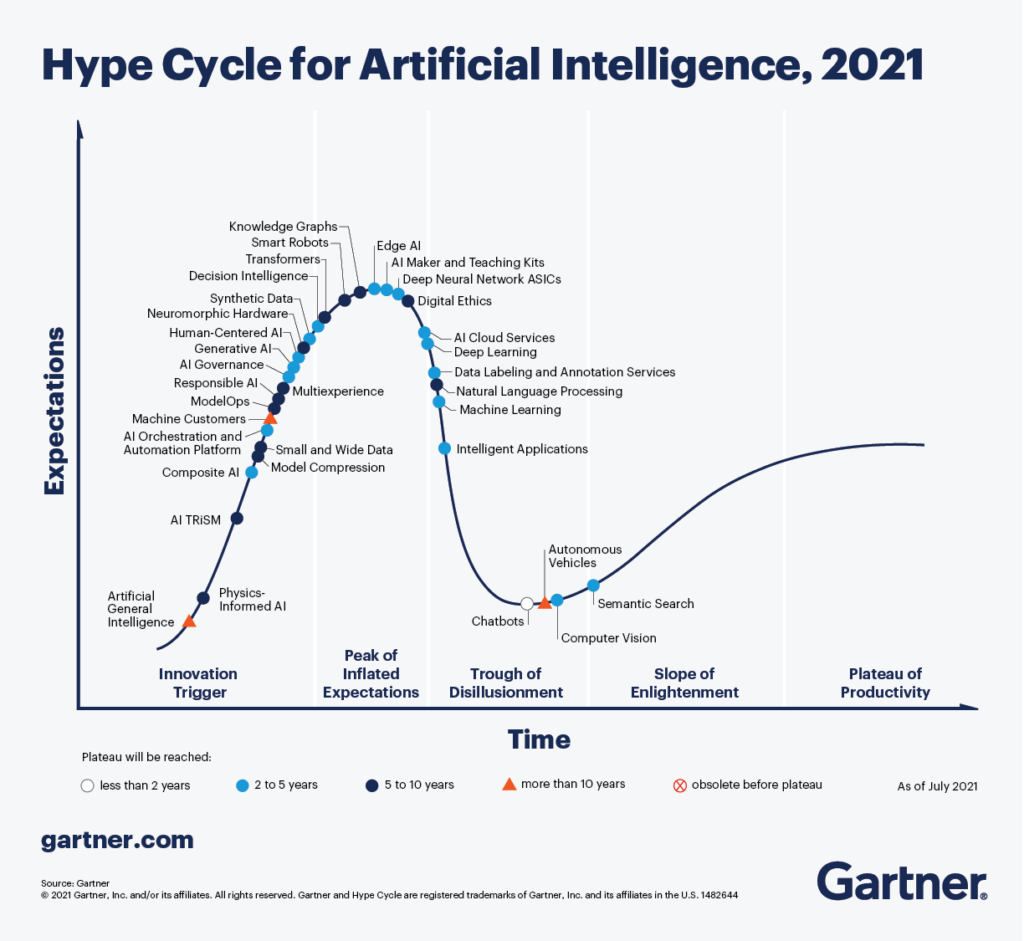

Gartner publishes reports related to the Hype Cycle of AI. You can read the detailed reports from 2019 and 2021.

The image below is from the Hype Cycle for AI, 2021 report.

Based on the report from 2021, we seem to be some distance away from the technology being able to satisfactorily answer the questions in my mind.

But there are areas where we seem to be getting out of what the Gartner report calls the “Trough of Disillusionment” and moving towards the “Slope of Enlightenment”.

Based on my research in the area, I agree with this.

Let’s explore this further.

The promising future

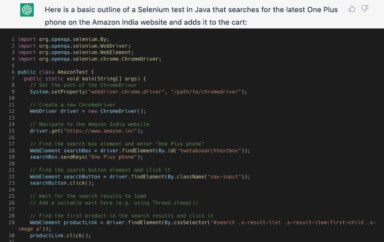

Recently I came across a lot of interesting buzz created by ChatGPT, and I had to try it out.

Being a curious tester at heart, I signed up for it on https://chat.openai.com/ and looked at the kind of responses ChatGPT generates for use cases related to test strategy and automation.

The role and value of AI in testing

I took ChatGPT for a spin to see how it could help create a test strategy and generate code to automate some tests. In fact, I also tried creating content using ChatGPT. Look out for my next blog post for the details of that experiment.

I am amazed to see how far we have come in making AI technology accessible to end users.

The answers to a lot of the non-coding questions, though generic, were pretty spot-on. Just as the code generated by record-and-playback tools cannot be used directly and needs to be tuned and optimized for regular usage, the answers provided by ChatGPT are best treated as a starting point. You get a high-level structure, with the major areas identified, which you can then detail out based on context that only you are aware of.

But I often wonder: what is the role of AI in testing? Is it just a buzzword gone viral on social media?

I did some research in the area, but most of the references I found were blog posts and articles about “how AI can help make testing better”.

Here is a summary from my research on the trends in, and hopes for, AI in testing.

Test script generation

This aspect was pretty amazing to me. The code generated was usable, though in isolation. Typically in our automation framework, we have a particular architecture we conform to while implementing the test scripts. The code generated for specific use cases will need to be optimized and refactored to fit in the framework architecture.

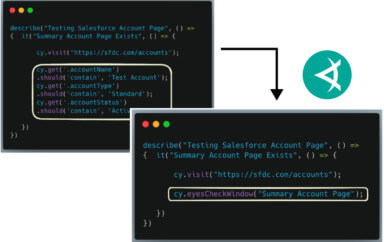

Test script generation using Applitools Visual AI

Applitools Visual AI takes a very interesting approach to this. Let me explain it in more detail.

There are two main aspects to a test script implementation.

- Implementing the actions/navigations/interactions with the product-under-test

- Implementing various assertions based on the above interactions to validate the functionality is working as expected

We typically write only the obvious and important assertions in the test. Even this can make the code quite complex and slow down test execution.

The less important assertions are typically ignored – for lack of time, or also because they may not be directly related to the intent of the test.

For the assertions you have not added in the test script, you either need to implement different tests to validate them or, worse yet, they are ignored.

There is another category of assertions that are important but very difficult or impossible to implement in your test scripts (depending on your automation toolset). For example, validating colors, fonts, page layouts, overlapping content, images, etc. is complex to implement, if it is possible at all. For these types of validations, you usually end up relying on a human testing these specific details manually. Given the error-prone nature of manual testing, many of these validations are (unintentionally) missed or ignored, and the incorrect behavior of your application gets released to production.

This is where Applitools Visual AI comes to the rescue.

With Applitools Visual AI integrated in your UI automation framework, you do not need to implement a lot of assertions. It can take care of your functional and visual (UI and UX) assertions with a single line of code. That single line checks the full screen automatically, which substantially increases your test coverage.

Let us see an example of what this means.

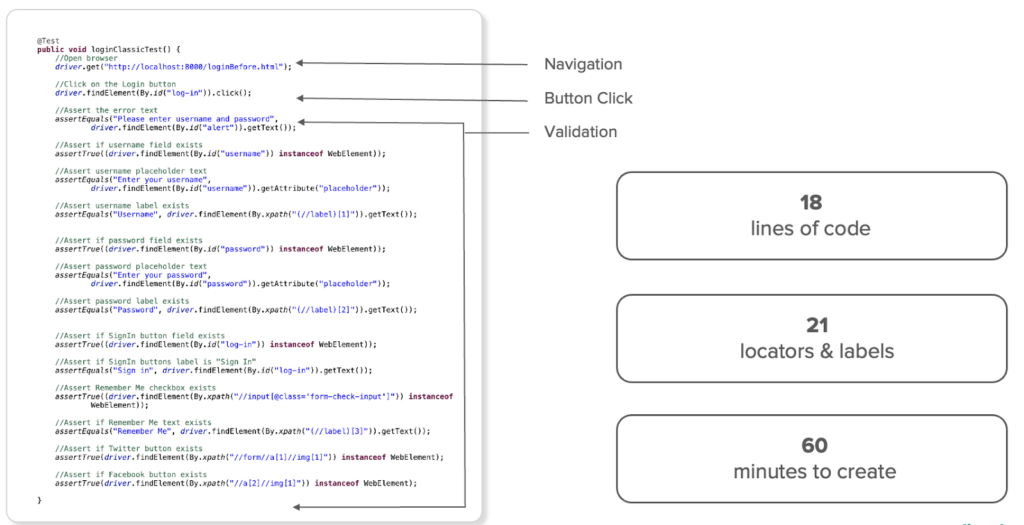

Below is a simple “invalid-login” test with assertions.

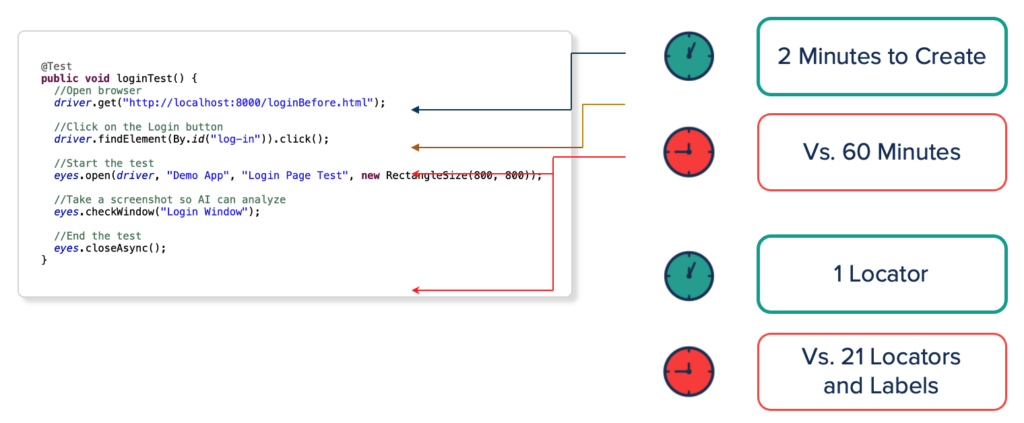

If you use Applitools Visual AI, the same test changes as below:

NOTE: In the above example with Applitools Visual AI, the lines eyes.open and eyes.closeAsync are typically called from your before and after test method hooks in your automation framework.

You can see the vast reduction in the code required for the same “invalid-login” test when using Applitools Visual AI, compared to the regular assertion-based code we are used to writing. Hence, the time needed to implement the test is significantly reduced.

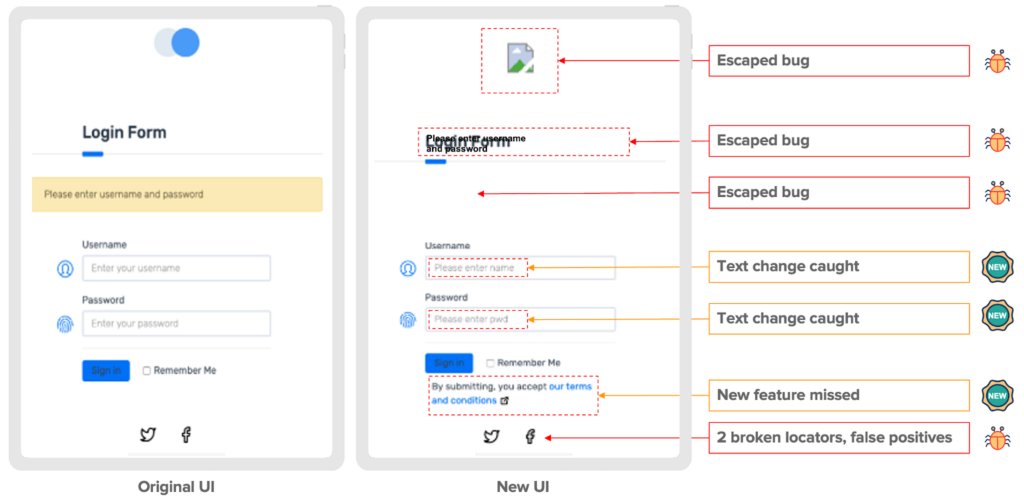

The true value is seen when the test runs against different builds of your product that have changes in functionality.

The standard assertion-based test will fail at the first error it encounters, whereas the test with Applitools is able to highlight all the differences between the two builds, which include:

- Broken functionality – bugs

- Missing images

- Overlapping content

- Changed/new features in the new UI

All the above is done without having to rely on locators, which could have changed as well and caused your test to fail for a different reason. This is possible because of the AI algorithms Applitools provides for visual comparison, which it reports as 99.9999% accurate.

Thus, your test coverage increases, and it becomes much harder for bugs to escape your attention.

The Applitools AI algorithms can be used in any combination, as appropriate to the context of your application, to get the maximum validation possible.
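To make the fail-fast versus report-everything contrast concrete, here is a minimal, self-contained sketch. It is a toy comparison of two maps of element names to values, not Applitools Visual AI or its API; the class name, method names, and sample element data are all hypothetical and only illustrate the idea.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Illustrative only: a toy "snapshot diff", NOT how Applitools Visual AI works internally.
class SnapshotDiffSketch {

    // Fail-fast style: returns the first mismatching element and stops,
    // like a chain of assertions that aborts on the first failure.
    static String firstFailure(Map<String, String> baseline, Map<String, String> current) {
        for (Map.Entry<String, String> entry : baseline.entrySet()) {
            if (!entry.getValue().equals(current.get(entry.getKey()))) {
                return entry.getKey();
            }
        }
        return null; // no mismatch found
    }

    // Snapshot style: walks both builds and collects every difference.
    static List<String> allDifferences(Map<String, String> baseline, Map<String, String> current) {
        List<String> diffs = new ArrayList<>();
        for (Map.Entry<String, String> entry : baseline.entrySet()) {
            String key = entry.getKey();
            if (!current.containsKey(key)) {
                diffs.add("missing: " + key);
            } else if (!entry.getValue().equals(current.get(key))) {
                diffs.add("changed: " + key);
            }
        }
        for (String key : current.keySet()) {
            if (!baseline.containsKey(key)) {
                diffs.add("new: " + key);
            }
        }
        return diffs;
    }

    // Hypothetical data for the previous build of a login page.
    static Map<String, String> baselineBuild() {
        Map<String, String> page = new LinkedHashMap<>();
        page.put("errorMessage", "Invalid username or password");
        page.put("logoImage", "logo.png");
        page.put("loginButton", "Sign in");
        return page;
    }

    // Hypothetical data for the new build: one change, one removal, one addition.
    static Map<String, String> newBuild() {
        Map<String, String> page = new LinkedHashMap<>();
        page.put("errorMessage", "Login failed");      // changed text
        page.put("loginButton", "Sign in");            // unchanged
        page.put("forgotPasswordLink", "Forgot?");     // new element (logoImage is gone)
        return page;
    }

    public static void main(String[] args) {
        System.out.println("First failure: " + firstFailure(baselineBuild(), newBuild()));
        System.out.println("All differences: " + allDifferences(baselineBuild(), newBuild()));
    }
}
```

With this sample data, the fail-fast check reports only `errorMessage`, while the snapshot-style comparison also reports the missing logo image and the new link in the same run.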

Based on the data from the survey done on “The impact of Visual AI on Test Automation”, we can see below the advantages of using Applitools Visual AI.

Visual AI: The Empirical Evidence

5.8x faster

Visual AI allows tests to be authored 5.8x faster compared to the traditional code-based approach.

5.9x more efficient

Test code powered by Visual AI increases coverage via open-ended assertions and is thus 5.9x more efficient per line of code.

3.8x more stable

Reducing brittle locators and labels via Visual AI means reduced maintenance overhead.

45% more bugs caught

Open-ended assertions via Visual AI are 45% more effective at catching bugs.

Increasing test coverage

AI technology is getting pretty good at suggesting test scenarios and test cases.

There are many products that already use AI technology under the hood to provide valuable features to their users.

Increasing test coverage using Applitools Ultrafast Test Cloud

A great example of an AI-based tool is the Applitools Ultrafast Test Cloud, which allows you to scale test execution seamlessly without having to rerun the tests on each of the other browsers and devices.

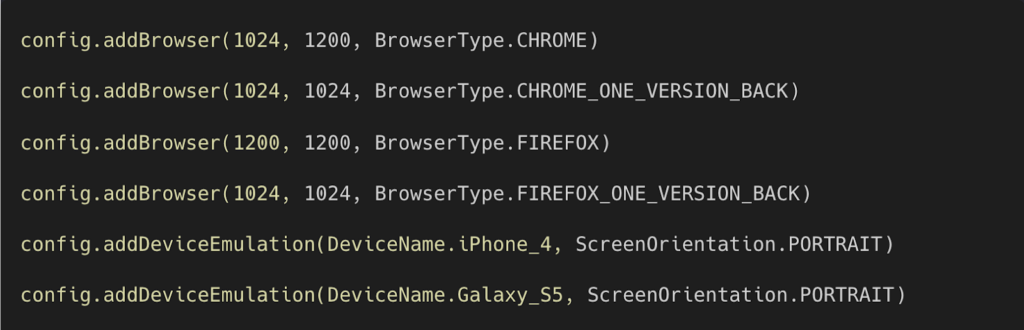

In the image below, you can see how easy it is to scale your web-based test execution across the devices and browsers of your choice as part of the same UI test execution using the Applitools Ultrafast Grid.

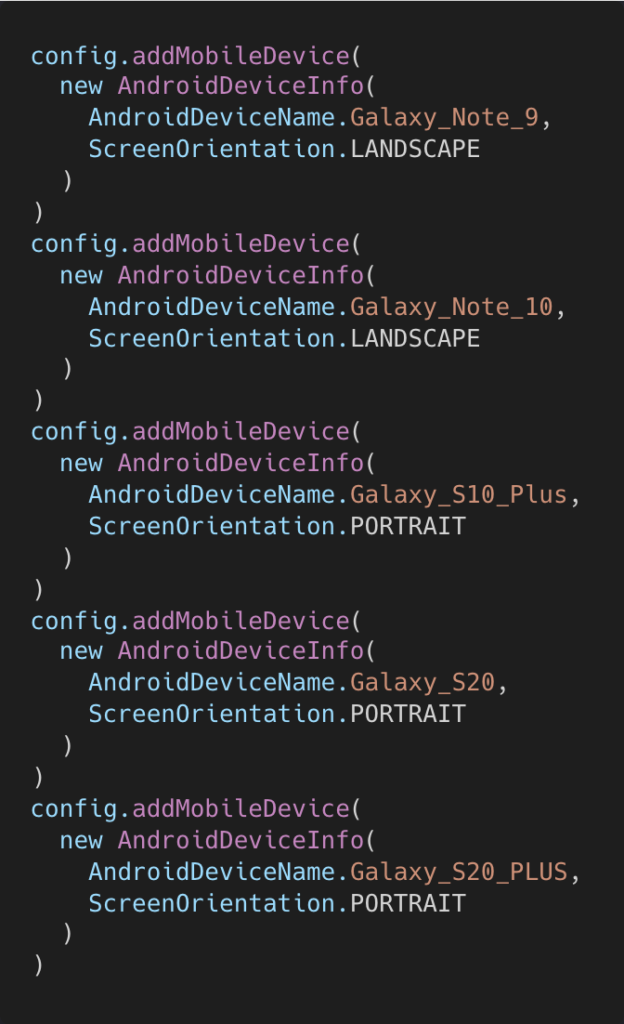

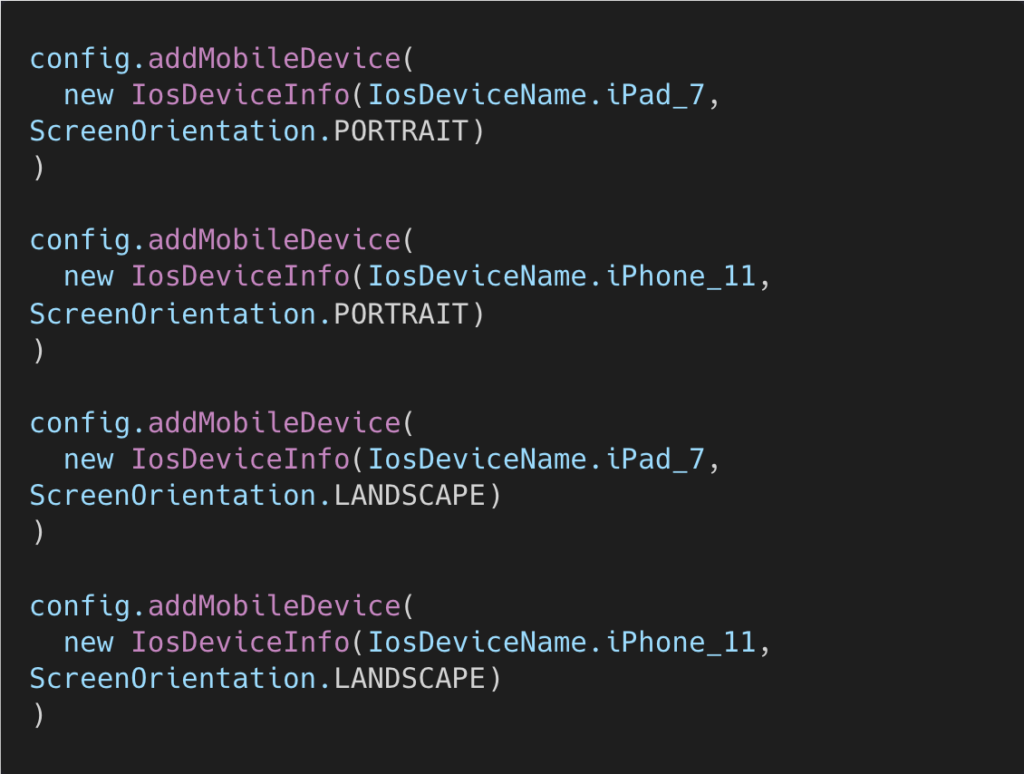

Similarly, you can use the Applitools Ultrafast Native Mobile Grid to scale the test execution for your iOS and Android native applications, as shown below:

Example of using Applitools Ultrafast Native Mobile Grid for Android apps:

Example of using Applitools Ultrafast Native Mobile Grid for iOS apps:

Test data generation

This is an area that still needs to improve. In my experiments, ChatGPT did not generate actual test data, but its response indicated all the important areas we need to think about from a test data perspective. This is a great starting point for any team, and it helps ensure no obvious area is missed.
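Turning such a checklist into concrete data is still up to the team. As a minimal sketch, assume one of the areas on the list is boundary values for a numeric field. This is an illustrative assumption on my part, not something taken from the ChatGPT response, and the class name, method names, and the "age between 18 and 65" rule are hypothetical.

```java
import java.util.ArrayList;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

// Illustrative only: classic boundary value analysis for an inclusive integer range.
class BoundaryDataSketch {

    // Returns the values just outside, on, and just inside each boundary.
    // Uses long so that min - 1 and max + 1 cannot overflow an int.
    static List<Long> boundaryValues(int min, int max) {
        if (min > max) {
            throw new IllegalArgumentException("min must not exceed max");
        }
        Set<Long> values = new LinkedHashSet<>(); // keeps order, drops duplicates for narrow ranges
        values.add((long) min - 1);
        values.add((long) min);
        values.add((long) min + 1);
        values.add((long) max - 1);
        values.add((long) max);
        values.add((long) max + 1);
        return new ArrayList<>(values);
    }

    // The rule under test: a value is valid when it lies within [min, max].
    static boolean isValid(long value, int min, int max) {
        return value >= min && value <= max;
    }

    public static void main(String[] args) {
        // Hypothetical rule: age must be between 18 and 65, inclusive.
        for (long age : boundaryValues(18, 65)) {
            System.out.println(age + " -> " + (isValid(age, 18, 65) ? "valid" : "invalid"));
        }
    }
}
```

For the hypothetical age rule, this produces the six values 17, 18, 19, 64, 65, and 66, of which only 17 and 66 are invalid.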

Debugging

We use code quality checks in our code base to ensure the code is of high quality. While the code quality tool itself usually provides suggestions on how to fix the flagged issues, there are times when the fix is tricky. This is an area where I got a lot of help from ChatGPT.

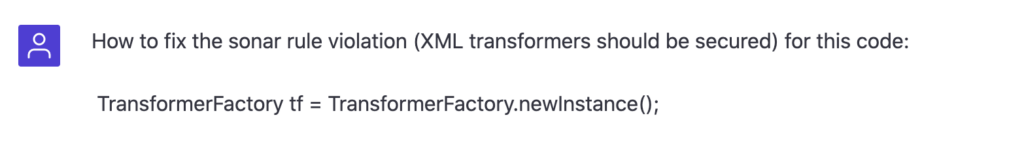

Example: In one of my projects, I was getting a Sonar error related to “XML transformers should be secured”.

I asked ChatGPT this question:

The solution to the problem was spot-on, and I was immediately able to resolve my Sonar error.
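For readers who have not run into this rule, here is a minimal sketch of the kind of fix commonly applied for it in Java. This is not the answer ChatGPT gave me, which is not reproduced here. It uses the standard JAXP `TransformerFactory` and `XMLConstants` attributes from the JDK, and assumes the JDK's default transformer implementation; the class and method names are hypothetical.

```java
import java.io.StringReader;
import java.io.StringWriter;
import javax.xml.XMLConstants;
import javax.xml.transform.Transformer;
import javax.xml.transform.TransformerException;
import javax.xml.transform.TransformerFactory;
import javax.xml.transform.stream.StreamResult;
import javax.xml.transform.stream.StreamSource;

// Illustrative only: one common way to harden an XML transformer against external entity access.
class SecureTransformerSketch {

    // Creates a factory that is not allowed to fetch external DTDs or stylesheets.
    static TransformerFactory newSecureFactory() {
        TransformerFactory factory = TransformerFactory.newInstance();
        // An empty string means "no protocols allowed" for external access.
        factory.setAttribute(XMLConstants.ACCESS_EXTERNAL_DTD, "");
        factory.setAttribute(XMLConstants.ACCESS_EXTERNAL_STYLESHEET, "");
        return factory;
    }

    // Runs an identity transform (input copied to output) using the hardened factory.
    static String identityTransform(String xml) {
        try {
            Transformer transformer = newSecureFactory().newTransformer();
            StringWriter out = new StringWriter();
            transformer.transform(new StreamSource(new StringReader(xml)), new StreamResult(out));
            return out.toString();
        } catch (TransformerException e) {
            // Wrapped so callers do not need to handle a checked exception in this sketch.
            throw new IllegalStateException("XML transformation failed", e);
        }
    }

    public static void main(String[] args) {
        System.out.println(identityTransform("<greeting>hello</greeting>"));
    }
}
```

The key lines are the two `setAttribute` calls; the rest only shows the hardened factory still transforms ordinary XML.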

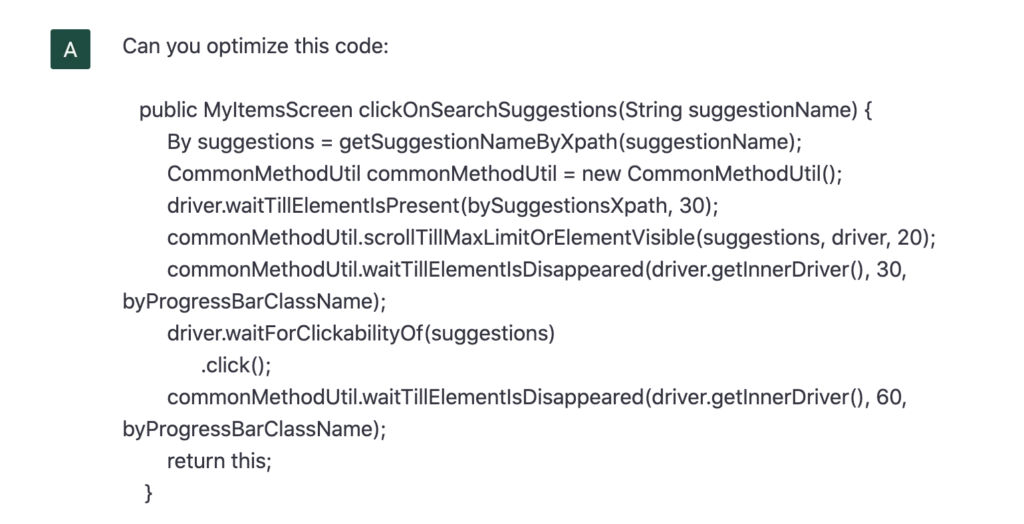

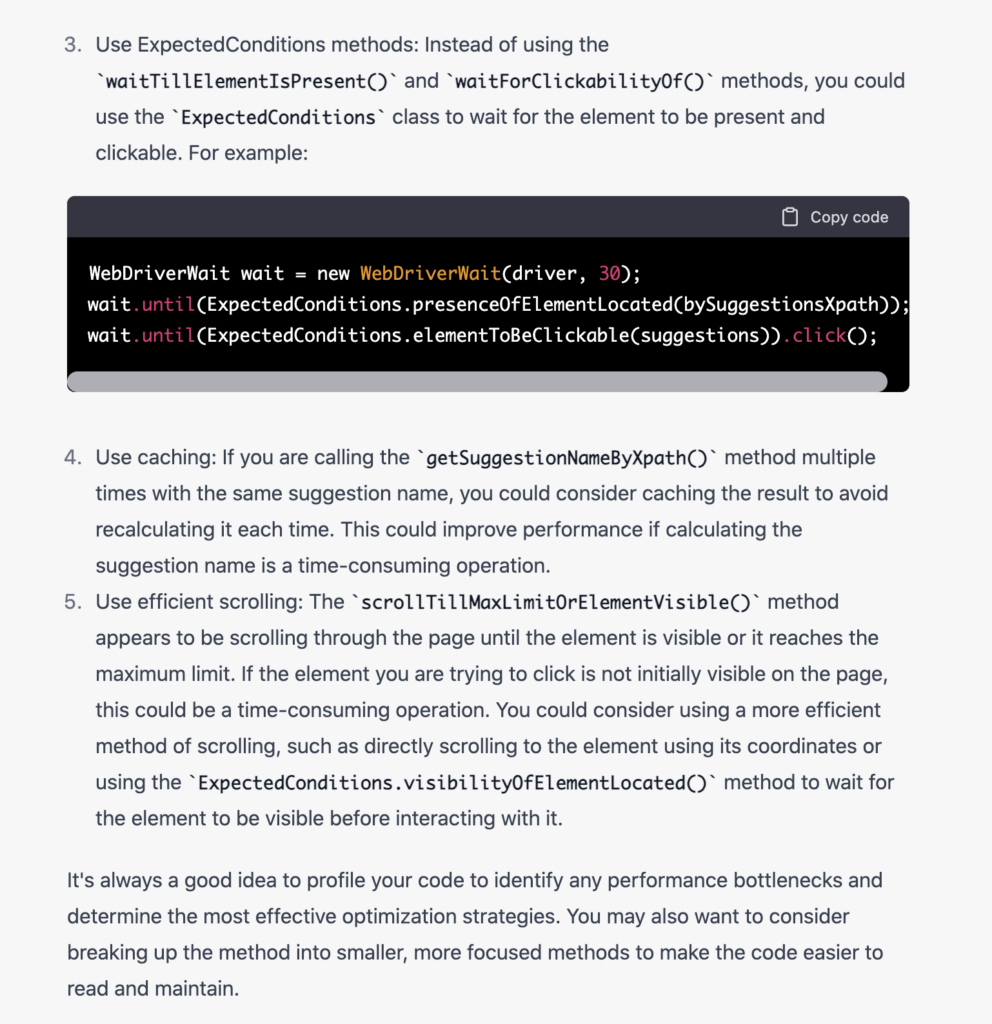

Code optimization and fixing

Given a block of code, I was able to use ChatGPT to suggest a better way to implement that code, without me providing any context of my tech stack. This was mind-blowing!

Here is an example of this:

ChatGPT provided 5 suggestions, all of them actionable and hence very valuable!

Analysis and prediction

This is an area where I feel tools are still limited. Getting to truly contextual analysis, and eventually to predictions and recommendations, requires product context, learning, and the use of product- and team-specific data.

What other AI tools exist?

While most of the examples here are based on ChatGPT, other tools are also in the works, both in research and in real-world applications.

Here are some interesting examples and resources to help you explore the fascinating work that is going on.

- Galactica: Meta’s Answer to GPT-3 for Science

- Sparrow: Improving alignment of dialogue agents via targeted human judgements

- GODEL (Grounded Open Dialogue Language Model): Large-Scale Pre-training for Goal-Directed Dialog

- DialoGPT: Toward Human-Quality Conversational Response Generation via Large-Scale Pretraining

- BERT (Bidirectional Encoder Representations from Transformers): Pre-training of Deep Bidirectional Transformers for Language Understanding

- Pathways Language Model (PaLM): Scaling to 540 Billion Parameters for Breakthrough Performance

- Pathways: A next-generation AI architecture

- Using DeepSpeed and Megatron to Train Megatron-Turing NLG 530B, A Large-Scale Generative Language Model

- Scaling Language Models: Methods, Analysis & Insights from Training Gopher

- LaMDA: Towards Safe, Grounded, and High-Quality Dialog Models for Everything

- More Efficient In-Context Learning with GLaM

- BlenderBOT: Multi-Modal Open-Domain Dialogue

- Amazon’s Alexa Teacher Models

- Whisper: Approaches human-level robustness and accuracy on English speech recognition

- DALL-E: Generate images

The above list is not exhaustive, and it focuses on research and development.

There are also tools that leverage AI and ML and already provide value to users. Some of these tools are:

- Applitools Visual AI

- QuillBot’s AI-powered paraphrasing tool to enhance your writing

- Out of office email generator

- Viral Post Generator

Testing AI systems

The tester in me is also thinking about another aspect. How would I test AI systems? How can I contribute to building and helping the technology and tools move to the Slope of Enlightenment in Gartner’s Hype Cycle? I am very eager to learn and grow in this exciting time!

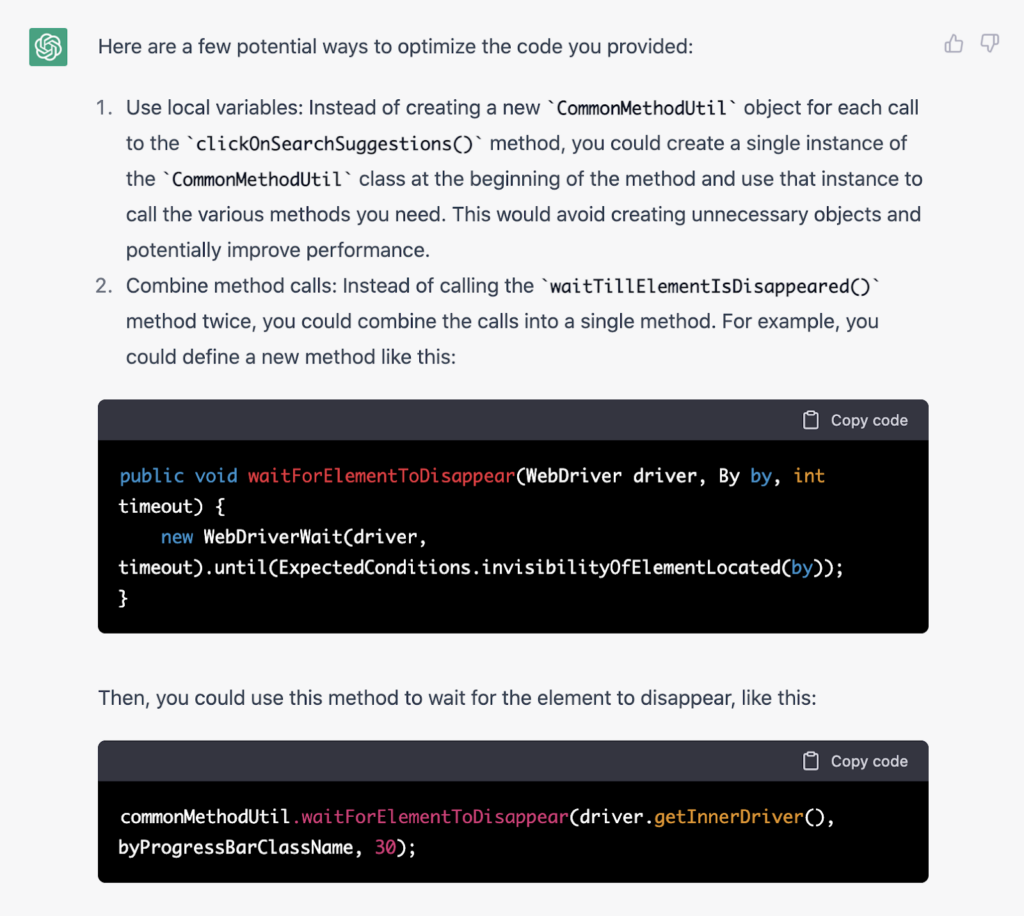

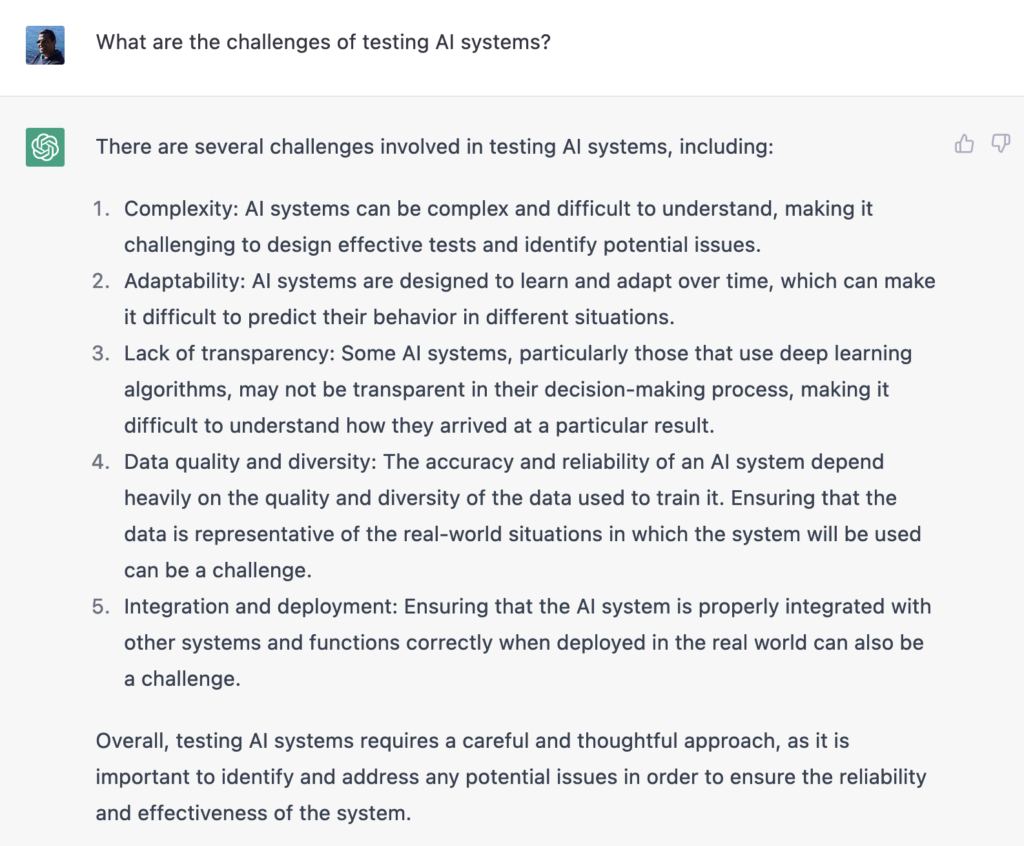

Challenges of testing AI systems

Given I do not have much experience in this (yet), various challenges come to mind when I think about testing AI systems. But by now I am getting too lazy to type, so I asked ChatGPT to list the challenges of testing AI systems, and it did a better job of articulating my thoughts … it almost felt like it read my mind.

This answer seems quite to the point, but also generic. In this case, I also do not have any specific points I can think of, given my lack of knowledge and experience in this field. So I am not disappointed.

Why generic? Because I have certain thoughts, ideas, and context in my mind, and I am trying to build a story around them. ChatGPT has no insight into “my” thoughts and ideas. It did a great job of giving responses based on the data it was trained on, taking complexity, adaptability, transparency, bias, and diversity into consideration.

For fun, I asked ChatGPT: “How to test ChatGPT?”

The answer was not bad at all. In fact, it gave a specific example of how to test it. I am going to give it a try. What about you?

What’s next? What does it mean for using AI in testing?

There is a lot of amazing work happening in the field. I am very happy to see this happen, and I look forward to finding ways to contribute to its evolution. The great thing is that this is not about technology, but how technology can solve some difficult problems for the users.

From a testing perspective, there is some value we can start leveraging from the current set of AI tools. But there is also a lot more to be done and achieved here!

Sign up for my webinar, “Unlocking the Power of ChatGPT and AI in Testing: A Real-World Look”, to learn more about the uses of AI in testing.

Learn more about Visual AI with Applitools Eyes or reach out to our sales team.